2.4. Building blocks I: What makes the Universe habitable?#

Learning Objectives:

Basics of Big Bang Cosmology (scale factor changes with time)

The Fine Tuning of the Cosmological Constant and other parameters, and their role in enabling a life-permitting universe.

Definition of the statistical mechanical entropy (\(S = k \log N\)) of a physical system.

Explanation of the Second Law in terms of a low entropy initial condition, and the conservation of the number of possible states. Explain why clumping of matter, and evolution of life, is compatible with the Second Law.

The goal of this lecture is to explain in very general terms some features of the Universe that are necessary in order for it to support life.

I. Our best theories of Nature#

Our two best experimentally verified theories of Nature are:

Einstein’s Theory of General Relativity (GR) (classical theory describing gravity in terms of curved spacetime)

The Standard Model of Particle Physics (SM) (quantum theory of all the particles and forces, besides gravity)

Nobody yet knows how to create a complete theory of “quantum gravity” that would combine both of these into a consistent overall mathematical framework. There exist various ideas (string theory, loop quantum gravity) that are speculative and not currently experimentally testable.

I.a. Standard Model#

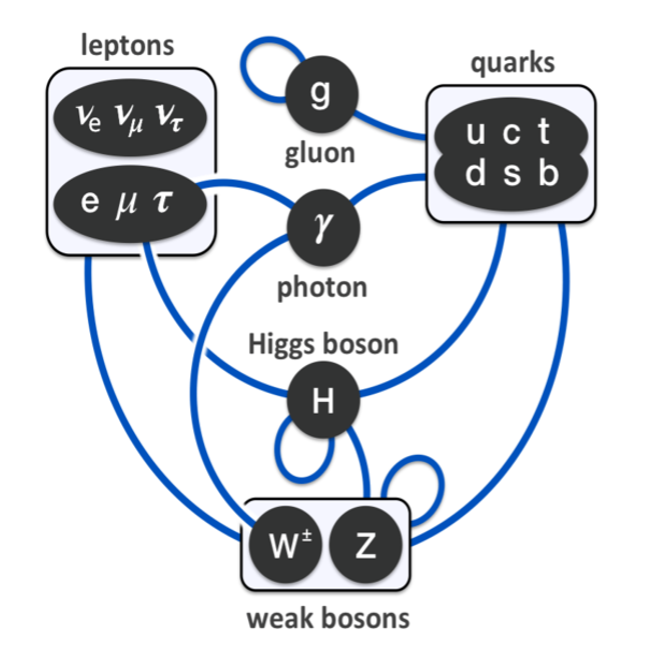

The SM describes the 3 forces besides gravity (strong, weak, electromagnetic), the basic matter particles (quarks & leptons), and the Higgs field, which gives mass to the other fundamental particles (except the photon and the gluons, the carriers of the EM and strong forces, which remain massless).

Fig. 2.24 Standard Model particles (black) and interactions (shown in blue). Gravity not shown but interacts with everything.#

Interestingly, the matter particles all come in 3 “generations”, e.g. the electron has two big brothers called the muon and tauon, and the quarks and neutrinos also come in sets of 3. In each set of 3 you have a light one, a medium one, and a heavy one. But, only the lightest matter particles (the electron, up quark, and down quark) combine to form ordinary matter. The others are unstable and decay by the weak force.

In order to make predictions with the SM, you need to specify 25 different constants of Nature. These are things like strengths of forces, and masses of fundamental particles. For example, the strength of the electromagnetic force is determined by the fine-structure constant:

\(\alpha = \frac{e^2}{\hbar c} \approx \frac{1}{137.036}\)

This is a dimensionless constant, meaning that there are no units. This is because (working in CGS units) the units in the electric charge (e) squared cancel when you divide by Planck’s constant hbar and the speed of light c. Only dimensionless constants really tell you something meaningful about Nature, since if a constant has units, its value can be changed by changing your system of units (a human convention).
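As a quick check of the claim that the units cancel, here is a minimal sketch that evaluates the fine-structure constant in SI units, where the CGS expression \(e^2/\hbar c\) becomes \(e^2/(4\pi\epsilon_0 \hbar c)\). The numerical constants are standard CODATA values that I am supplying myself; they are not part of the lecture.

```python
import math

# CODATA 2018 values in SI units (assumed inputs, not given in the lecture).
E_CHARGE = 1.602176634e-19      # elementary charge, in coulombs
HBAR = 1.054571817e-34          # reduced Planck constant, in J s
C_LIGHT = 299792458.0           # speed of light, in m/s
EPSILON_0 = 8.8541878128e-12    # vacuum permittivity, in F/m


def fine_structure_constant():
    """Return alpha = e^2 / (4 pi epsilon_0 hbar c), the SI form of e^2 / (hbar c)."""
    return E_CHARGE**2 / (4.0 * math.pi * EPSILON_0 * HBAR * C_LIGHT)


alpha = fine_structure_constant()
print(f"alpha   = {alpha:.6e}")
print(f"1/alpha = {1.0 / alpha:.3f}")
```

The same pure number comes out in any consistent system of units, which is the sense in which only dimensionless constants carry physical meaning.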

There also has to exist at least one other kind of particle called dark matter, that interacts with gravity but not light, which is needed to explain the formation of galaxies. But we don’t know what its other properties are, so it isn’t part of the SM!

I.b General Relativity:#

The fundamental concept is that of a spacetime geometry, in which lengths and times are not determined a priori, but rather the geometry interacts with matter. The effect of matter on spacetime geometry is what we call gravity.

“Spacetime tells matter how to move; matter tells spacetime how to curve.”

—John Wheeler

In General Relativity there is only 1 important constant, related to the Cosmological Constant, which we will discuss later. (Newton’s constant G has units, so it doesn’t count.)

II. Big Bang Cosmology (BBC)#

In GR, to figure out the exact geometry of the Universe, you’d need to figure out the gravitational field of every bit of matter. Fortunately, the geometry of the Universe at very large, cosmological scales turns out to be extremely simple. According to the BBC model, the geometry of spacetime has the following features at large distance scales (billions of light years):

There exists a spacetime coordinate system (t, x, y, z) with respect to which matter is (on average) at rest.

At one moment of time t, the geometry of space is just normal “flat” 3-dimensional Euclidean space.

On average, the universe is homogeneous (the same at every place) and isotropic (the same in every direction).

However, it is not true that every time is the same. Instead, space is expanding with time. This means that any two galaxies at rest will get increasingly farther away as time passes. As this expansion is uniform, it has no “center”.

The distance between any two matter particles at rest is proportional to the scale factor a(t), an increasing function of t.

Written out as an equation, we have a “metric” which tells you the length separation ds between two infinitesimally nearby spacetime points:

\(ds^2 = -dt^2 + a(t)^2 [(dx)^2 + (dy)^2 + (dz)^2]\)

Don’t worry too much about the -dt^2 term, this just says that we are using coordinates where time proceeds at a uniform rate. (In Special Relativity, equations involving time squared always have a minus sign in front of them.)

The thing in square brackets is just the geometry of ordinary 3-dimensional Euclidean space. Although this particular use of infinitesimals might look unfamiliar, it really just encodes the ordinary Pythagorean theorem \(L^2 = (\Delta x)^2 + (\Delta y)^2 + (\Delta z)^2\). However, there is a factor of a(t)^2 outside of this, which tells you that this flat space is expanding with time, with lengths proportional to the scale factor a(t), a function that increases with time. (While I won’t write this out, there is a differential equation for a(t) called the Friedmann equation, which lets you figure out how a(t) changes with time if you know the energy density and pressure of matter in the universe.)
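To give a feel for how the scale factor evolves, here is a rough sketch that integrates the Friedmann equation numerically for a flat universe containing only matter and a cosmological constant. The parameter values (`H0_KM_S_MPC`, `OMEGA_M`, `OMEGA_L`) are illustrative assumptions of mine, radiation is ignored, and the midpoint rule is just a convenient choice of integrator.

```python
import math

# Assumed present-day parameters (illustrative values, not from the lecture).
H0_KM_S_MPC = 67.7   # Hubble constant, in km/s/Mpc
OMEGA_M = 0.31       # matter fraction of the critical density
OMEGA_L = 0.69       # cosmological-constant fraction (flat: the two sum to 1)

KM_PER_MPC = 3.0857e19
SECONDS_PER_GYR = 3.15576e16   # one billion Julian years, in seconds


def hubble_rate(a):
    """H(a) = (da/dt)/a in units of 1/Gyr, from the Friedmann equation."""
    h0_per_gyr = H0_KM_S_MPC / KM_PER_MPC * SECONDS_PER_GYR
    return h0_per_gyr * math.sqrt(OMEGA_M / a**3 + OMEGA_L)


def time_since_big_bang(a_end, steps=100_000):
    """Integrate dt = da / (a H(a)) from a = 0 up to a_end (midpoint rule)."""
    da = a_end / steps
    total = 0.0
    for i in range(steps):
        a_mid = (i + 0.5) * da
        total += da / (a_mid * hubble_rate(a_mid))
    return total


# By convention a = 1 today, so this is the time taken to grow from a = 0.
age_gyr = time_since_big_bang(1.0)
print(f"Time for a(t) to grow from 0 to 1: {age_gyr:.2f} Gyr")
```

With these assumed inputs the result lands close to 14 billion years. Calling `time_since_big_bang(0.5)` instead gives the time at which distances between galaxies were half of what they are today.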

II.a. Initial Singularity#

At a time 13.8 billion years ago, a(t) = 0. This is the “Big Bang singularity” where the universe had zero size! As far as we know, the Big Bang was the beginning of time (and hence the Universe). However, this should be taken with a grain of salt, as we would need to have a theory of quantum gravity to fully understand the nature of physics at very early times. Right now, we can only speak confidently about physics that took place starting a fraction of a second after the Big Bang. But, certainly the universe as we know it did not exist before this time.

II.b. Big Bang Nucleosynthesis#

At a bare minimum, the Big Bang model has to be correct once you get to a few minutes after the Big Bang when Big Bang Nucleosynthesis (BBN) occurred, since it successfully predicts the ratios of light elements. There’s going to be another lecture about that so I won’t go into detail.

II.c Baryogenesis#

But, as a precondition for BBN, by the time you reach \(\sim 10^{-4}\) seconds after the BB, the universe has to have started out with a slight excess of matter (quarks, electrons) over antimatter (antiquarks, positrons). Otherwise it would have all turned into radiation (photons) with nothing left to make baryons, gas, stars, planets, people, etc. Since the quarks combine into protons and neutrons, collectively called baryons, the physical process which produced this net excess of matter, whatever it was, is called Baryogenesis. There are various ideas for how this happened, some of which, interestingly, use the fact that there have to be at least 3 generations of neutrinos in order to break the symmetry between matter and antimatter. But nobody knows for sure! (For those who wish to investigate more, there are 3 “Sakharov conditions” that any solution to this problem needs to satisfy.)

II.d. Cosmological Constant#

Enough about early times, let’s go to late times. Around 1998, cosmologists realized that they could only make sense of their observations if there exists a new type of “dark energy” (not to be confused with dark matter) which causes the expansion of the universe to accelerate with time, so that for the past 5 billion years or so, we have:

\(\frac{d^2}{dt^2} a(t) > 0\)

This is unlike normal matter, which causes the expansion to decelerate (since normal gravity is attractive). To describe this we need one extra parameter called the Cosmological Constant, which tells you the energy density of empty space. In “Planck units” set by G, hbar, and c, it can be written as the dimensionless number:

\(\Lambda = 1.1056 \times 10^{-52}\,\mathrm{m}^{-2} = 2.888 \times 10^{-122}\, L_p^{-2}\)

where the Planck length \(L_p = \sqrt{\frac{\hbar G}{c^3}} \simeq 10^{-35}\,\mathrm{m}\) is the fundamental scale set by quantum gravity. For \(\Lambda > 0\) (empty space has positive energy, but also tension in the 3 space directions) the effect is antigravitational and the expansion accelerates.
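The conversion to Planck units can be reproduced in a few lines. This is only a sketch: the values of \(\hbar\), \(G\), and \(c\) are standard SI values that I am supplying, while the value of \(\Lambda\) in inverse square meters is the one quoted above. Since \(\Lambda\) has units of inverse length squared, the dimensionless number is \(\Lambda L_p^2\).

```python
import math

# Standard SI values (assumed inputs, not given in the lecture).
HBAR = 1.054571817e-34   # reduced Planck constant, in J s
G_NEWTON = 6.67430e-11   # Newton's constant, in m^3 kg^-1 s^-2
C_LIGHT = 299792458.0    # speed of light, in m/s

LAMBDA_SI = 1.1056e-52   # cosmological constant in m^-2, as quoted in the text

# Planck length L_p = sqrt(hbar G / c^3), in meters.
planck_length = math.sqrt(HBAR * G_NEWTON / C_LIGHT**3)

# Multiplying by L_p^2 removes the units of inverse length squared.
lambda_planck = LAMBDA_SI * planck_length**2

print(f"Planck length      = {planck_length:.4e} m")
print(f"Lambda (Planck units) = {lambda_planck:.4e}")
```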

III. Fine Tuning#

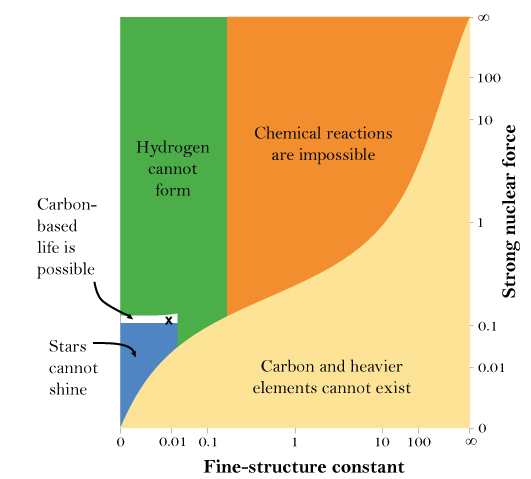

This is the observation that several of the 26 coupling constants in Nature have to lie within certain narrow ranges in order for life to be possible.

Most dramatically, there are lots of quantum effects in the Standard Model that contribute positively or negatively to the cosmological constant \(\Lambda\), so that we would expect its value in Planck units to be closer to 1 than to \(10^{-122}\). But if it were that big, the universe would either have recollapsed in about \(10^{-43}\,\mathrm{s}\) (if \(\Lambda < 0\)), or else anything bigger than \(10^{-35}\,\mathrm{m}\) would be ripped apart by the expansion of the universe (if \(\Lambda > 0\)). For galaxies to form, we need \(\Lambda\) not to be more than about 1000 times bigger than it is. So, a bunch of seemingly unrelated numbers had to cancel to about 120 decimal places for life to be possible.

A similar problem afflicts the mass of the Higgs field, but that only requires about 30 decimal places to cancel.

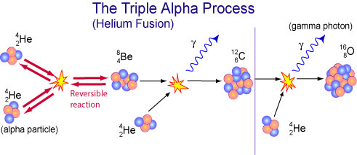

A couple of smaller (1% level) fine tunings seem to be necessary for things like stable nuclei, stellar formation, and the triple alpha process (described in another lecture).

Fig. 2.25 Strengths of EM force and Strong force plotted on log scale, white region is compatible with life as we know it.#

Fig. 2.26 Triple Alpha process: tuning is required to ensure that an energy level of Carbon-12 has almost the same energy as 3 alpha particles, enhancing what would otherwise be a rare process (Be-8 is highly unstable): [© Commonwealth Scientific and Industrial Research Organisation, 2015]#

III.a Philosophical Debates#

The mere existence of apparent fine-tuning is uncontroversial. But if you want to court controversy you can endorse one of the possible explanations:

There is some unknown, as yet to be discovered principle of physics which explains all this.

There is an enormous multiverse, across which the effective constants vary (string theory suggests this might be possible) and we live in one of the few nice universes (since the rest are uninhabitable).

God and/or some universe designer wanted to make a world with life.

Since this isn’t a philosophy class I won’t share my own preferences here, but this is an active area of discussion among philosophers of science.

IV. Thermodynamic History of the Universe#

Another big feature of the world we see is that the past is different from the future, due to the increase of entropy.

IV.a Definition of Entropy#

The entropy is just a count of the number N of possible states that a given physical system can be arranged in, while still looking the same macroscopically (i.e. microstates per macrostate). It is given by the Boltzmann formula:

\(S = k \log N\).

where \(k\) is the Boltzmann constant and the \(\log\) is just so if you have separate systems, S adds instead of multiplying. (The reason why N is finite is the Heisenberg Uncertainty Principle, which limits how accurately you can measure positions & momenta simultaneously.)
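The role of the logarithm can be seen in a tiny example. The sketch below assumes two independent systems with made-up state counts (`n_a` and `n_b` are hypothetical numbers chosen for illustration): the combined system has \(N_a \times N_b\) states, and its entropy is the sum of the two separate entropies.

```python
import math

K_B = 1.380649e-23  # Boltzmann constant, in J/K


def boltzmann_entropy(num_states, k=K_B):
    """Boltzmann formula S = k log N (natural logarithm)."""
    return k * math.log(num_states)


# Hypothetical state counts for two independent systems.
n_a, n_b = 20, 100

s_a = boltzmann_entropy(n_a)
s_b = boltzmann_entropy(n_b)

# Independent systems: every state of A can pair with every state of B.
s_combined = boltzmann_entropy(n_a * n_b)

print(f"S_a + S_b  = {s_a + s_b:.6e} J/K")
print(f"S_combined = {s_combined:.6e} J/K")
```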

Things evolve in irreversible directions due to the Second Law of Thermodynamics, which says that entropy S is increasing with time:

\(dS/dt > 0\).

This is a remarkable law given that the microscopic laws of physics are (with tiny and unimportant exceptions involving the weak force) symmetric between past and future. They are also reversible, meaning you can evolve from the future to the past just as easily as from the past to the future.

IV.b Why the Second Law is True#

The Second Law follows because:

the laws of physics are reversible, and

the universe began in a very low entropy state (i.e. not in a randomly selected state)

Reversibility implies that the histories of two different possible microstates can never merge with each other—they remain distinct forever (although they may become observationally indistinguishable from a macroscopic perspective).

Now imagine you have a system B which is in one of 20 possible microstates (with equal probability), and then it evolves after a fixed time to a system G which has 100 possible microstates. That could potentially happen with total certainty, since 100 > 20. But if you instead start in a random G state and try to have it evolve to system B, this can happen with at most 1/5 probability, since of the 100 states in G, only 20 of them can turn into B. The rest have to go do something else!
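The counting argument above can be written out as a toy model. This is only a sketch under a simplifying assumption: reversibility is represented by the rule that no two starting states may land on the same final state, and the function name `fraction_that_fits` is my own invention.

```python
def fraction_that_fits(n_source, n_target):
    """Largest fraction of source microstates that can evolve into the target
    system when distinct histories are never allowed to merge."""
    free_slots = list(range(n_target))
    assignment = {}
    for state in range(n_source):
        # Each source state needs its own unused target state; once the
        # target system is full, the remaining states must go elsewhere.
        assignment[state] = free_slots.pop() if free_slots else None
    landed = sum(1 for slot in assignment.values() if slot is not None)
    return landed / n_source


# B (20 states) evolving into G (100 states): every state can be accommodated.
print("B -> G:", fraction_that_fits(20, 100))

# G (100 states) evolving into B (20 states): at most 20 of the 100 fit.
print("G -> B:", fraction_that_fits(100, 20))
```

The asymmetry comes entirely from the state counts, not from any asymmetry in the rule itself, which is the same in both directions.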

Of course, for human-sized systems, N isn’t some small value. If I have a mole of water, then there are Avogadro’s number \(\sim 6 \times 10^{23}\) water molecules and at standard temperature and pressure:

\(S/k = \log N \sim 5 \times 10^{24}\)

which means that \(N\) is around \(10^{10^{24}}\)… an enormous number! This means that the probability of the entropy decreasing by any amount that would be detectable in everyday life is so tiny it may as well be 0. So in practice, it is an iron law, even though conceptually there can be small downward fluctuations.
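For anyone who wants to check the size of these numbers, here is a rough sketch. It assumes a tabulated standard molar entropy for liquid water of about 70 J/(K mol), a value I am bringing in from standard thermodynamic tables rather than from the lecture. Since \(N\) itself is far too large to store in a float, the code works with \(\log_{10} N\) instead.

```python
import math

K_B = 1.380649e-23           # Boltzmann constant, in J/K
MOLAR_ENTROPY_WATER = 69.95  # assumed tabulated value for liquid water, J/(K mol)

# Dimensionless entropy of one mole of water: S/k = log N (natural log).
s_over_k = MOLAR_ENTROPY_WATER / K_B

# Convert to a power of ten: N = 10**log10_n.
log10_n = s_over_k / math.log(10)

print(f"S/k     = {s_over_k:.2e}")
print(f"log10 N = {log10_n:.2e}")
```

So the exponent of \(N\) is itself a number with about 24 digits.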

However, this argument only works because we assume the universe started out with low entropy, around the time of the “Big Bang” when \(a(t) = 0\). If you had picked your initial condition randomly, you would almost certainly be in whatever macrostate has the largest value of N, which is a thermal equilibrium state where nothing happens. Then you would get time-symmetric behavior, and life would not be possible.

IV.c. Open Systems.#

It is important to note that the Second Law only applies as stated to a closed system. If a system is coupled to an outside environment, there is the possibility for entropy to go elsewhere. For example, the Earth receives energy from the Sun in a low entropy state (visible light) and then radiates it away into space in a higher entropy form (infrared radiation).

So, there is no reason why the entropy of the Earth has to increase with time, and there is certainly no conflict between the Second Law and biological evolution!
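A back-of-the-envelope version of this entropy budget is sketched below. It rests on assumptions that are mine, not the lecture's: both sunlight and the Earth's outgoing radiation are treated as blackbody radiation, whose entropy for energy \(E\) at temperature \(T\) is \(\frac{4}{3} E / T\), and the two temperatures are round illustrative values.

```python
# Assumed round temperatures (illustrative, not from the lecture).
T_SUN = 5800.0    # approximate temperature of the Sun's surface, in K
T_EARTH = 255.0   # approximate effective radiating temperature of Earth, in K


def radiation_entropy(energy_joules, temperature_kelvin):
    """Entropy of blackbody radiation: S = (4/3) E / T, in J/K."""
    return 4.0 * energy_joules / (3.0 * temperature_kelvin)


energy = 1.0  # follow one joule in and the same joule back out

s_in = radiation_entropy(energy, T_SUN)     # arrives as visible light
s_out = radiation_entropy(energy, T_EARTH)  # leaves as infrared radiation

print(f"Entropy in : {s_in:.3e} J/K")
print(f"Entropy out: {s_out:.3e} J/K")
print(f"Ratio out/in: {s_out / s_in:.1f}")
```

In this simple model the Earth sends away roughly twenty times more entropy than it receives for each joule that passes through, which leaves plenty of room for local decreases in entropy.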

IV.d. Clumping.#

In normal thermodynamic contexts like a box of gas, the highest entropy state tends to involve the gas being mixed uniformly through the whole box. Counterintuitively, in our expanding universe, the matter started out in a thermal, uniform state and later became more “clumpy” and “organized”. But the reason for this is that gravity is an attractive force. That means that as objects clump together, kinetic energy is released which can be used to increase entropy in other ways!

Suppose for example we have a bunch of particles interacting under the influence of gravity alone, with a Newtonian potential energy: \(V = -G Mm / r\), and the total energy is \(E = K + V\) where \(K\) is kinetic energy and \(V\) is potential energy. Then the “virial theorem” says that in a bound system with some near-steady state behavior, the average of these energies should satisfy:

\(\langle K \rangle = - \langle V \rangle /2\),

(both sides are positive since \(V\) is negative). Because of the 1/2 on the right, the decrease of \(V\) is larger in absolute terms, so as \(V\) decreases, \(E\) decreases, but \(K\) increases.

So, if we have a region with more mass clumped together, it will have a larger negative potential energy, which means its constituents will tend to have more kinetic energy. But that means it is hotter, so energy will be transferred to less clumped, colder regions. But the \(E\) goes the same direction as \(V\), so this makes the clumped system even more tightly bound!
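The bookkeeping in this argument is easy to check numerically. The sketch below simply applies the virial relation \(\langle K \rangle = -\langle V \rangle / 2\) to a few made-up values of the average potential energy (arbitrary units, chosen only for illustration).

```python
def virial_energies(potential):
    """Given the average potential energy V (negative for a bound system),
    return the average kinetic energy K = -V/2 and total energy E = K + V."""
    kinetic = -potential / 2.0
    total = kinetic + potential
    return kinetic, total


# Increasingly tightly bound systems (hypothetical values, arbitrary units).
for v in (-2.0, -4.0, -8.0):
    k, e = virial_energies(v)
    print(f"V = {v:5.1f}   K = {k:4.1f}   E = {e:5.1f}")
```

Reading down the table, the total energy falls while the kinetic energy rises: a gravitationally bound system heats up as it loses energy, which is why clumping runs away instead of evening out.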

V. Conclusion#

So to sum up, for our universe to be hospitable, a few things had to happen including:

life-favoring values for various fundamental physical constants,

a low entropy (or special) initial condition,

the initial expansion of the universe,

production of an excess of matter over antimatter.

Several aspects of all four items touch on big questions which are at the boundaries of current scientific knowledge.

The rest of the lectures will mostly take this backdrop for granted, but it’s good to know a lot of stuff had to go right with cosmology as a whole, before we can discuss what had to happen in specific places for life to be possible.